My Neuroscience and Neural Engineering Projects

I am driven to understand how the brain makes us who we are and lets us do the things we do. I am lucky enough to have had the opportunity to study the brain directly at The University of Pittsburgh in Dr. Andrew Schwartz's Motorlab. The Motorlab was one of the first to accomplish direct control of a computer cursor with neural signals, and has consistently pushed the field of brain-machine interfaces forward, achieving the first functional robotic neural prosthetic arm controlled in real time by a monkey's brain signals, as seen in this video from 2008:

Later they would demonstrate world-first 7-D robotic neural prosthetic control by a monkey and 10-D neural prosthetic control by a paralyzed human. These successes were only possible because of a deep scientific understanding of how the brain controls movement in healthy subjects. Thus the Motorlab also conducts many basic motor neuroscience experiments in order to inform future brain-machine interface efforts.

My work has been focused on understanding how networks of neurons in multiple frontoparietal areas enable us to interact with objects in the world, and how the characteristics of the object-related activity in these areas will inform the design of future brain-machine interfaces that can restore the ability to grasp, manipulate and use objects. I've also studied the changes that occur in the brain as a monkey learns to control a computer cursor or robot with their mind. My approach has frequently involved the application of engineering principles and skills that I learned in my undergraduate training in Mechanical Engineering. For instance, I used my knowledge in computer aided design and 3D printing to design a guidance system for use during electrode implant neurosurgery, enabling us to record from hundreds of neurons in multiple brain areas simultaneously. I've also employed mathematical and statistical tools from engineering to describe, model and analyze the data from these hundreds of neurons.

Below are some brief descriptions of some of my projects:

- Cortical Encoding of Object Presence

- Cortical Encoding and Learning of Object Affordances

- Electrode Array Implant Guidance System

- Contextual Object Information in M1

- Engineered Learning for Robotic Neural Prosthetic Control

- Neural Signatures of Long Timescale Learning in a Brain-Computer Interface (coming soon!)

- Biomimetic Robotic Lamprey (coming soon!)

Cortical Encoding of Object Presence

(in progress)

This experiment was designed to directly address issues encountered in the human neural prosthetic studies here at Pitt. They found that, though the subject could move the arm around in space with relative ease, when it came to grasping a real physical object, the robot did not behave as desired, as seen below:

video from: Downey, John E., et al. "Motor cortical activity changes during neuroprosthetic-controlled object interaction." Scientific reports 7.1 (2017): 16947.

This suggests that there may be some "extra" signal in the brain that activates when our movement is directed toward an object. Our brain decoders don't currently account for this signal.

My goal was to look for this object-related signal in a healthy monkey. To do this, I created a new task in which the monkey would:

- Reach to grasp an object

- Reach into the empty space where the object would otherwise be

- Reach toward the object but not grasp it

My approach was to reduce the neural variance due to movement differences by enforcing extremely similar movements with or without an object present. Thus, any remaining variance in the neural signal should reflect the cognitive signal of knowing an object is present in the workspace.

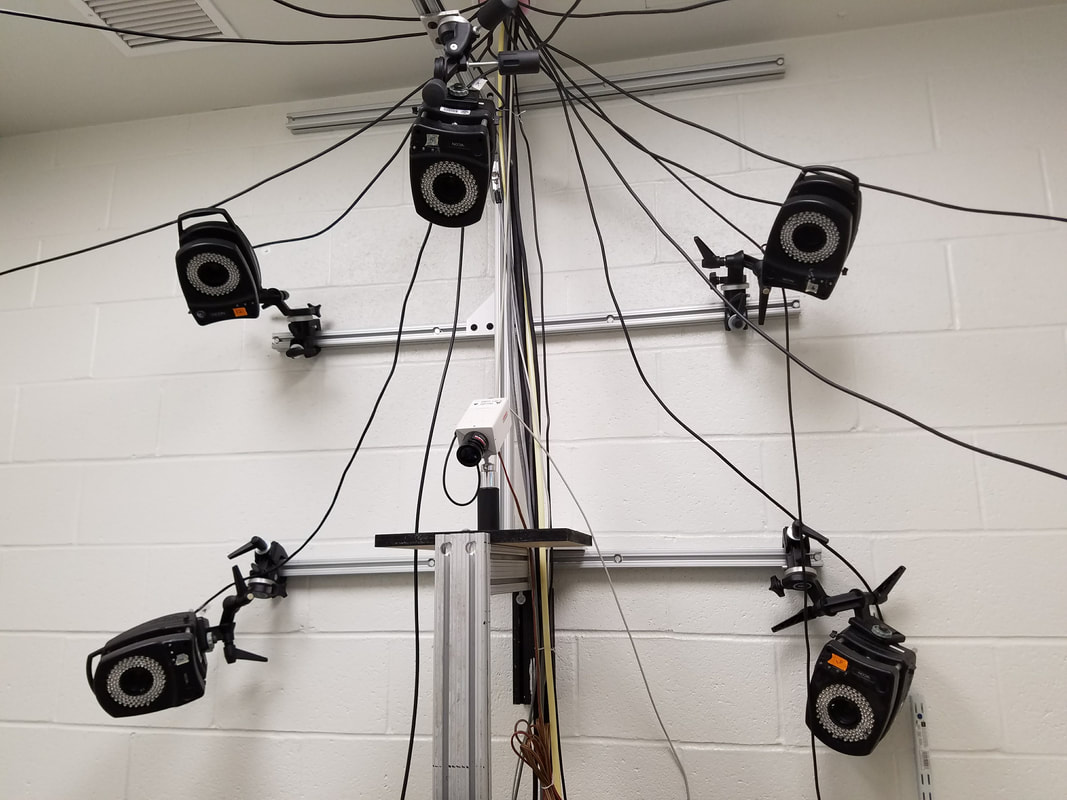

Getting the monkeys to successfully execute these behaviors took a lot of careful training and planning. For the conditions without grasping, I had to find a way to track the position of the hand in real time. To do this, I used a Vicon infrared motion tracking system.

The Vicon system uses an array of cameras to track reflective markers affixed to the body. It's the same technology that is used to capture movements for CGI in movies and video games.

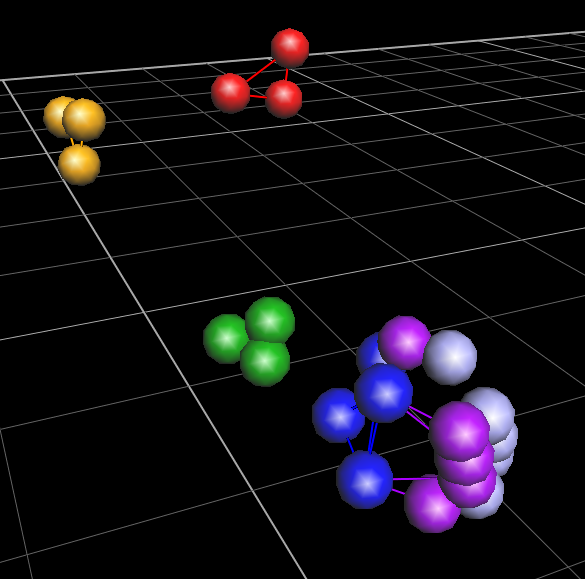

With some fine-tuning and custom software, I was able to use this system to stream the position of the monkey's hand in real time, to detect when he had made a successful reach. In the graphic below, the red markers are strapped on the torso, orange markers on the upper arm, green markers on the forearm, blue markers on the hand, purple markers on the proximal finger joints and lilac markers on the distal finger joints.

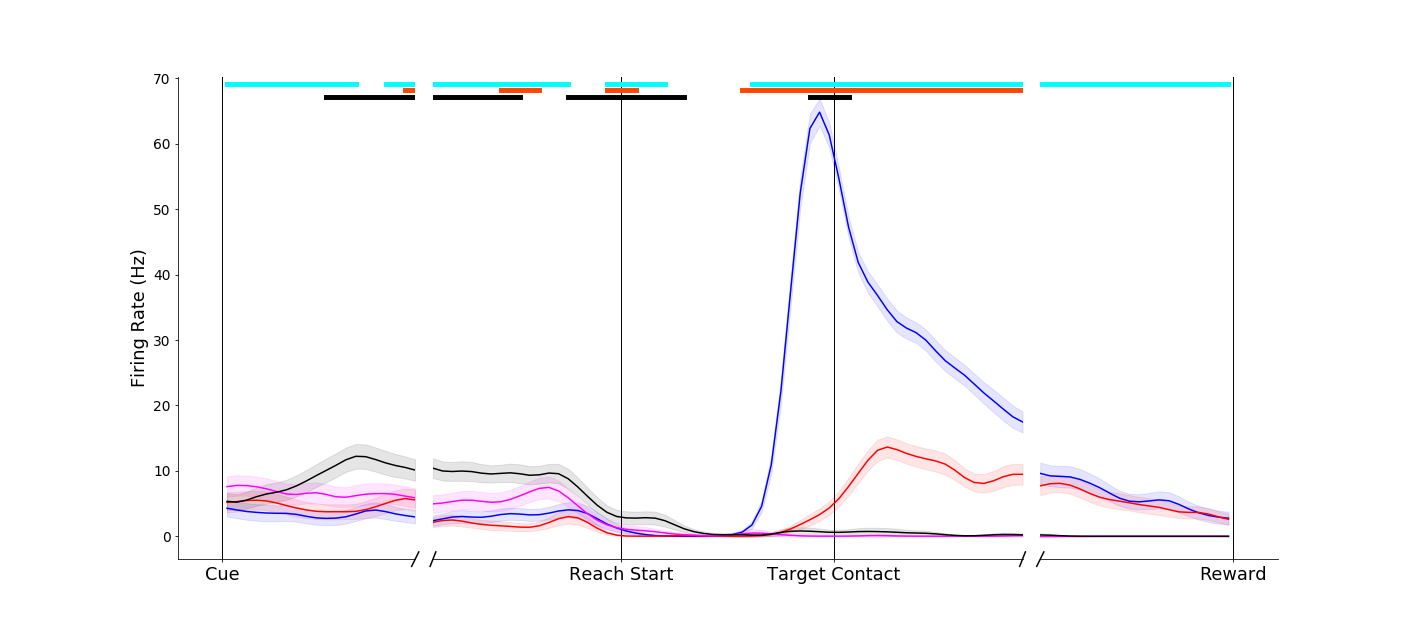

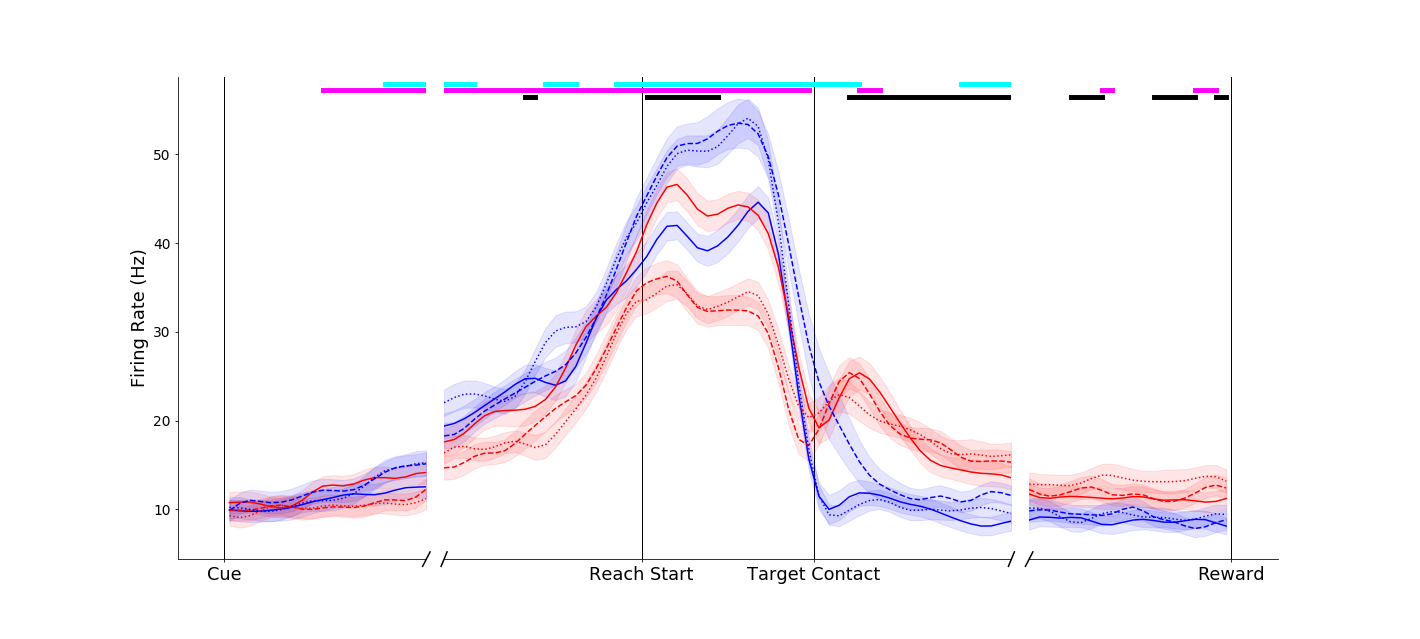

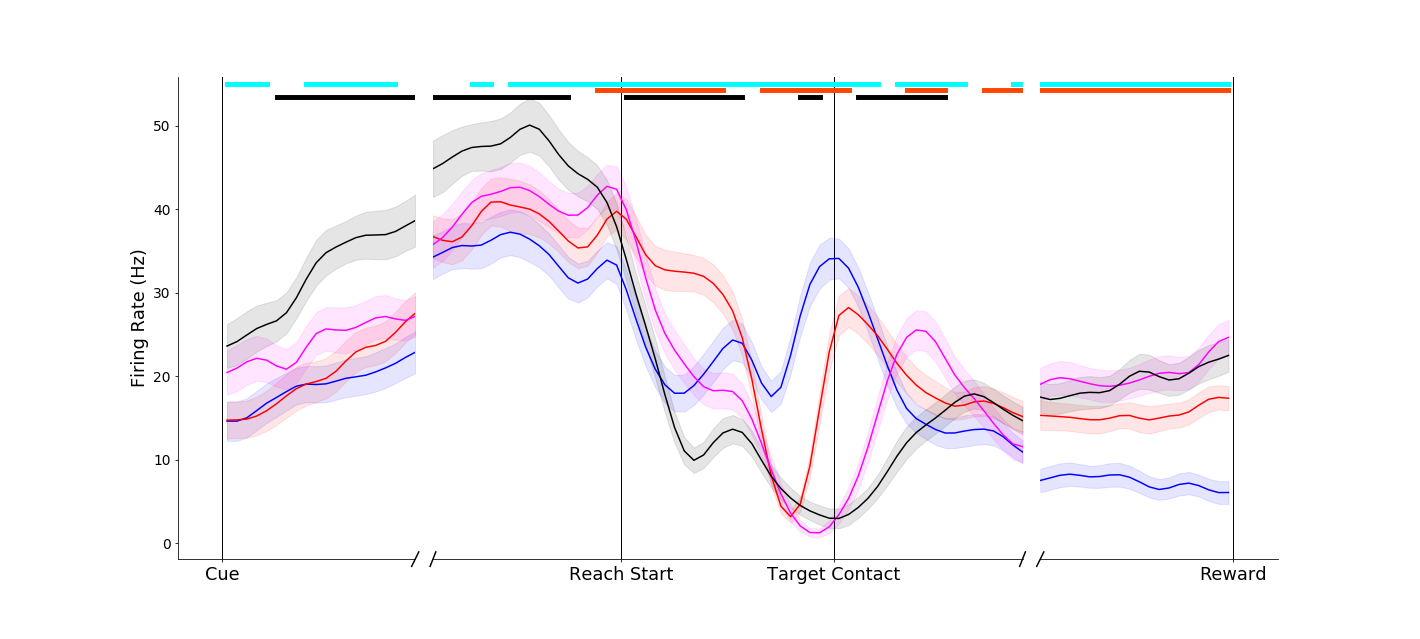

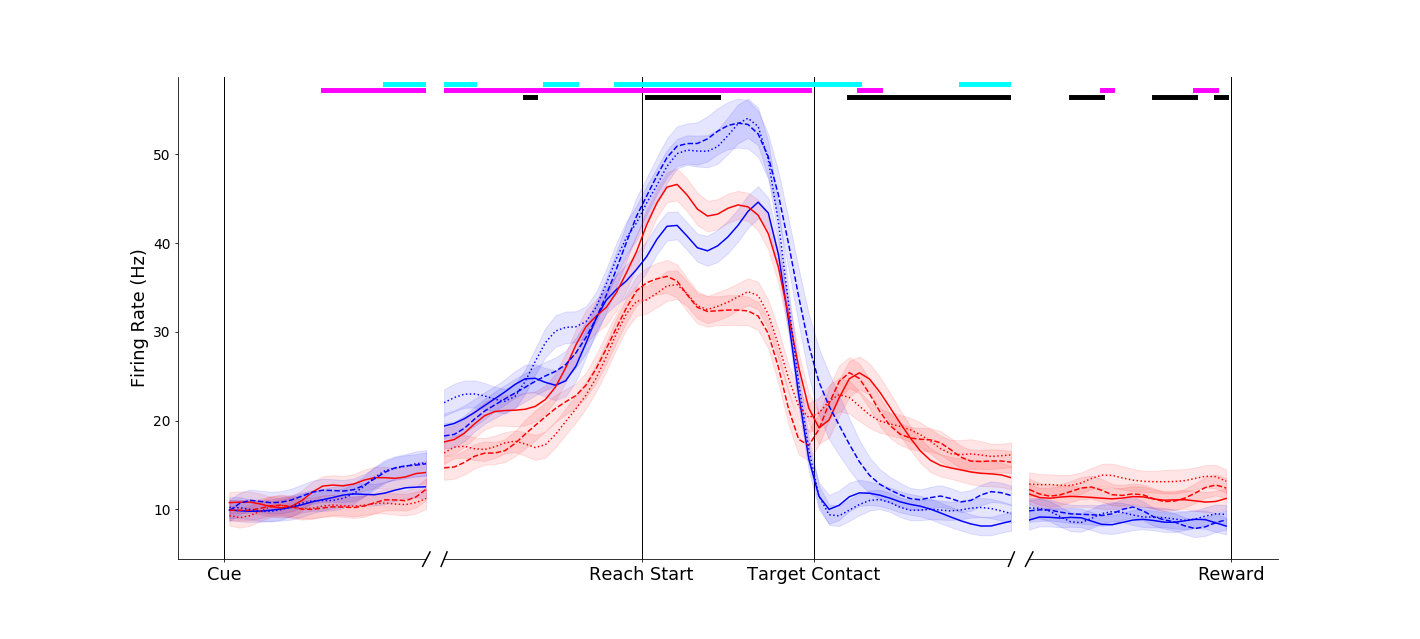

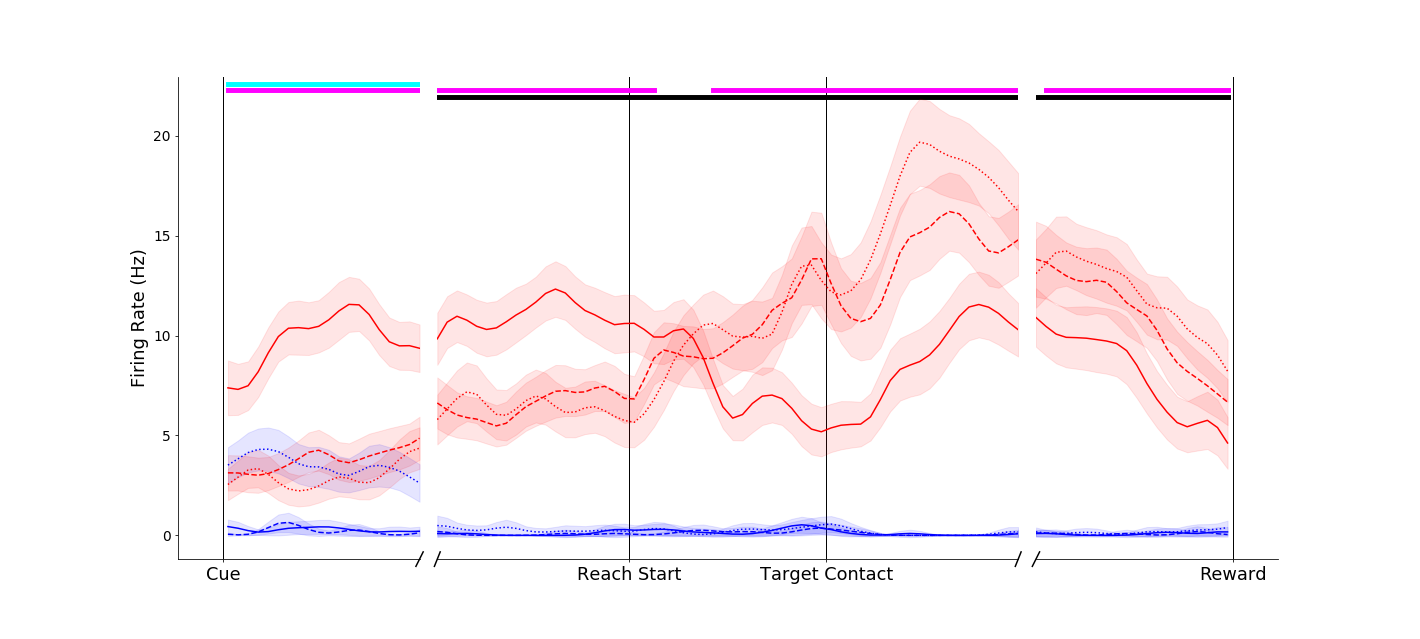

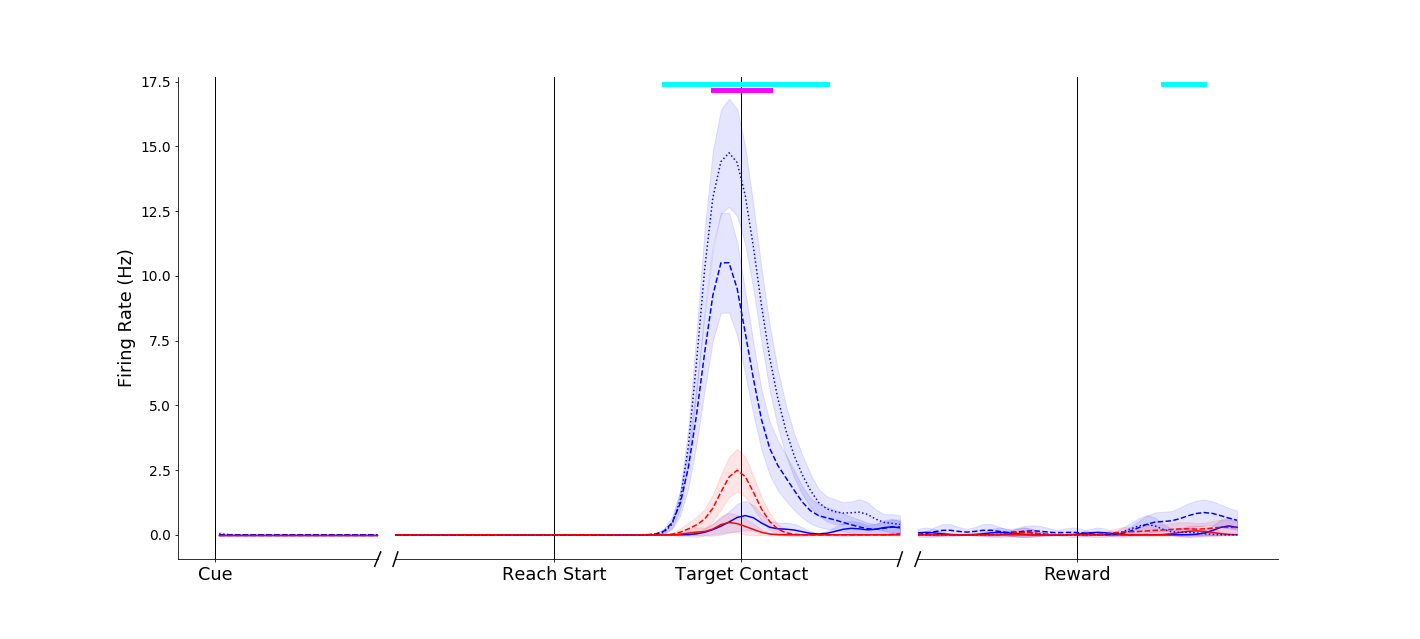

I recorded neural spiking activity in primary motor cortex, ventral premotor cortex and anterior intraparietal area during this task. I'm currently analyzing this data, but early results are extremely exciting! Strong object-presence effects can be seen in both single neurons and population activity in all three brain areas. Below are some preliminary single neuron firing rate plots. To orient you:

- Trial-averaged firing rates are plotted against time.

- The first tick on the x-axis is when the reach cue is delivered

- The second tick on the x-axis is when the reaching movement starts

- The third tick on the x-axis is when the reaching movement ends (object contact or goal position achieved)

- The final tick on the x-axis is when the reward is delivered

- Blue traces denote power grasp trials

- Red traces denote pinch grasp trials

- Magenta traces denote reaches toward the object without grasping it

- Black traces denote reaches into empty space
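As a rough illustration of what "trial-averaged firing rate" means here, the sketch below bins spike times relative to one alignment event per trial and averages across trials. It is a minimal example, not the analysis code behind these plots: the function name, the 50 ms bin width and the single-event alignment are all assumptions (the plots above are aligned to four task events, which would need additional handling).

```python
def trial_averaged_rate(spike_times_per_trial, event_times,
                        window=(-0.5, 1.0), bin_width=0.05):
    """Mean firing rate (spikes/s) per time bin, aligned to one event per trial.

    spike_times_per_trial: list of lists of spike times (s), one list per trial
    event_times: alignment event time (s) for each trial, e.g. movement onset
    window, bin_width: hypothetical defaults chosen only for illustration
    """
    n_bins = int(round((window[1] - window[0]) / bin_width))
    counts = [0] * n_bins
    for spikes, t0 in zip(spike_times_per_trial, event_times):
        for t in spikes:
            # Bin index of this spike relative to the start of the window
            idx = int((t - t0 - window[0]) // bin_width)
            if 0 <= idx < n_bins:
                counts[idx] += 1
    n_trials = len(event_times)
    # Convert summed counts to spikes per second, averaged over trials
    return [c / (n_trials * bin_width) for c in counts]
```

Computing one such trace per condition (power grasp, pinch grasp, reach without grasp, reach into empty space) gives the kind of colored traces described in the list above.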

A primary motor cortex neuron that reflects a classical understanding of motor cortex as a simple motor controller - the two conditions without grasp (black and magenta traces) evoke similar firing rates. In addition, the two different grasps (pinch, red; power, blue) evoke different firing rates. Of note, however, is a pre-movement object-presence effect (black trace is separate from the others on the left-hand side of the plot).

Another primary motor cortex neuron, this one displaying a dramatic object-presence effect. The reach-without-grasp condition (magenta trace) is markedly different from the reach-into-space condition (black trace), even though these two conditions entail very similar movements. A high percentage of neurons display effects like this!

The object-presence effect is frequently even more dramatic in premotor and parietal cortex. Take for example this neuron from anterior intraparietal cortex. It is almost completely silent when an object is present, but engaged when reaching into space. This is despite all of the conditions requiring very similar arm movements!

These plots are just a very early peek at what these intriguing data can tell us. I am currently actively exploring these data to analyze the timing, prevalence and characteristics of this object-presence effect. I will also be looking at information flow between the three brain regions I recorded from, and how this flow may be gated by the presence of an object. I'll have more results soon, so stay (cosine) tuned! This work will be submitted for publication in a science journal.

Cortical Encoding and Learning of Object Affordances

(in progress)

When you see a familiar object or tool, you are immediately aware of what it is - that is, how it feels to grasp and what it can be used for. With this project, I set out to find where and how this knowledge might be represented in our brains.

Affordances are the actions that can be performed on or with an object. Thus affordances depend both on the properties of the object itself (its shape and material) and on our own learned capabilities. Take for instance a plate. It affords grasping on the edge, and perhaps throwing like a frisbee. Now imagine that you learn how to spin the plate on your finger. Now the plate has a new affordance, that of spinning, though the plate itself didn't change!

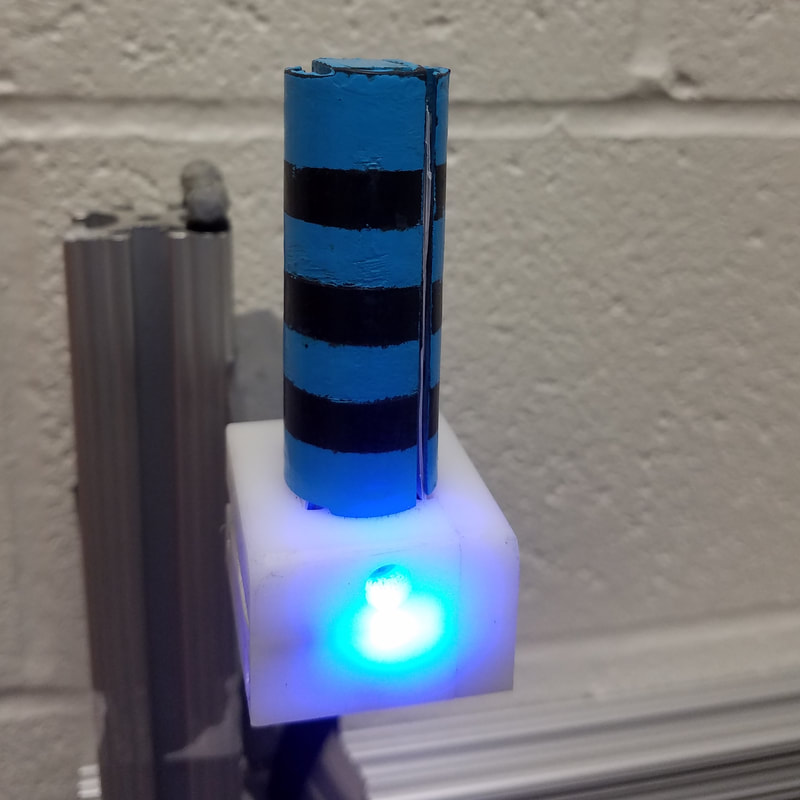

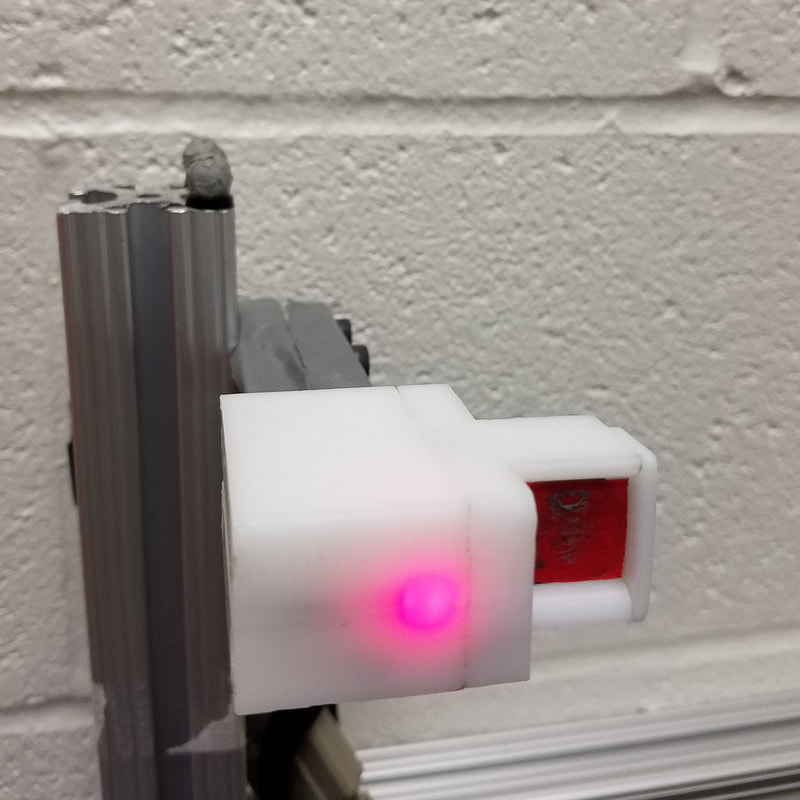

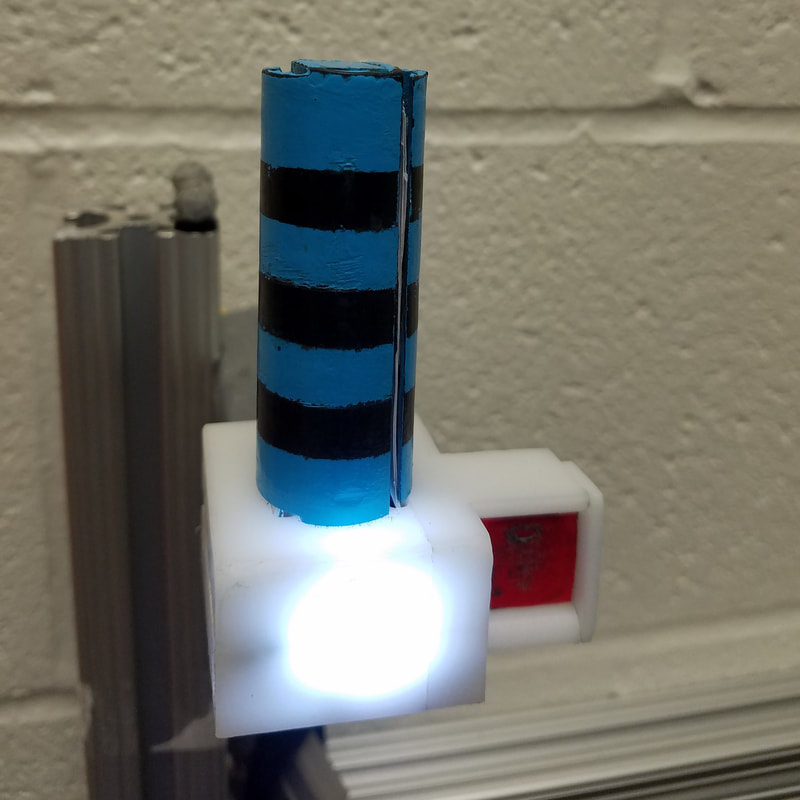

I crafted a series of objects with various affordances to explore if and how the primate motor system encodes object affordance knowledge, both grasp affordances and manipulation affordances. The objects were crafted with a CNC mill and a 3D printer, and contain integrated force sensors and LEDs.

Grasp Affordances

These objects were presented in the same spot in front of the monkey for every trial, so movements to pinch on the "simple" object (middle) were nearly identical to pinch grips on the "compound" object (right). Likewise for the power grips on the striped portions of the objects. Thus, a classical understanding of motor cortex would suggest that the brain activity should be the same no matter which object is grasped. As it turns out, there is a modest but significant difference in activity that may indicate affordance encoding.

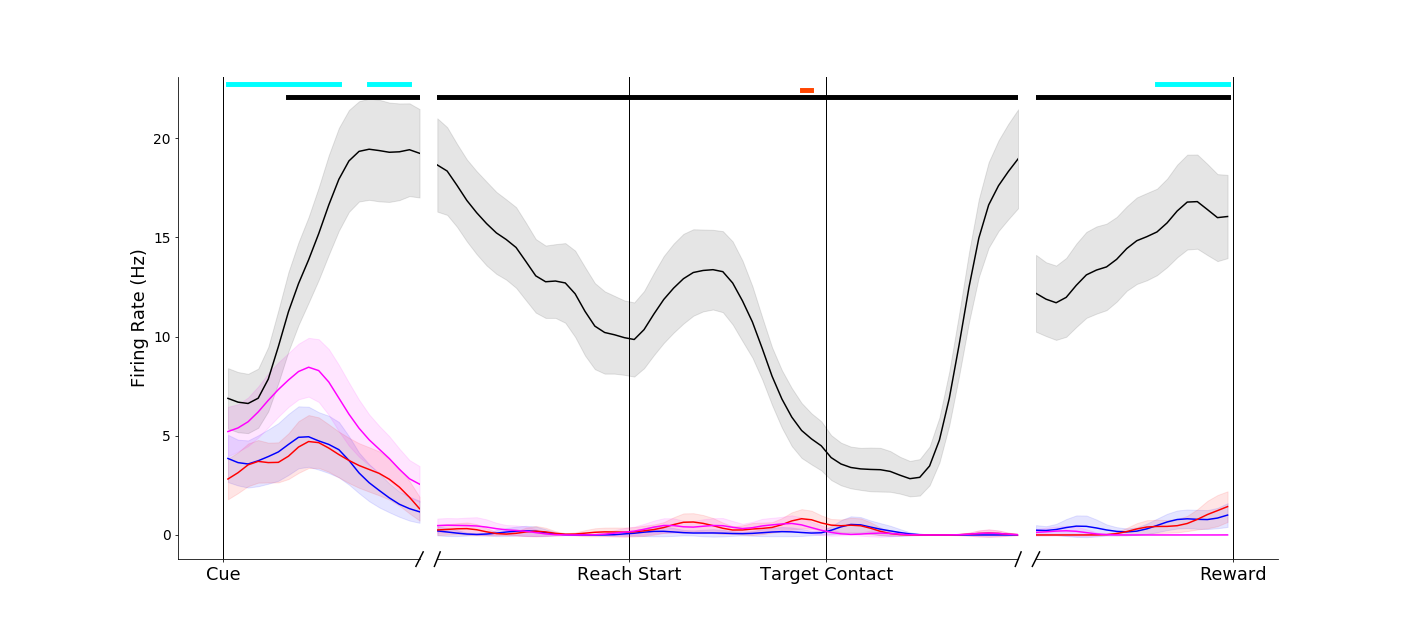

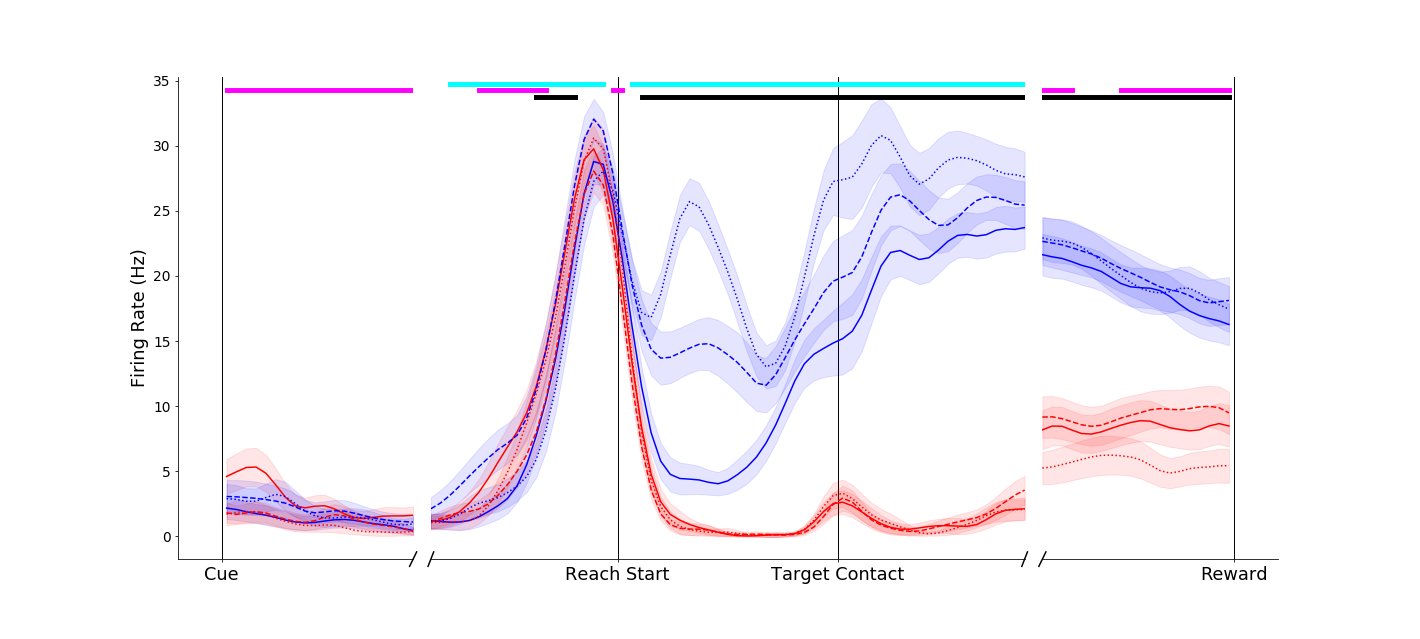

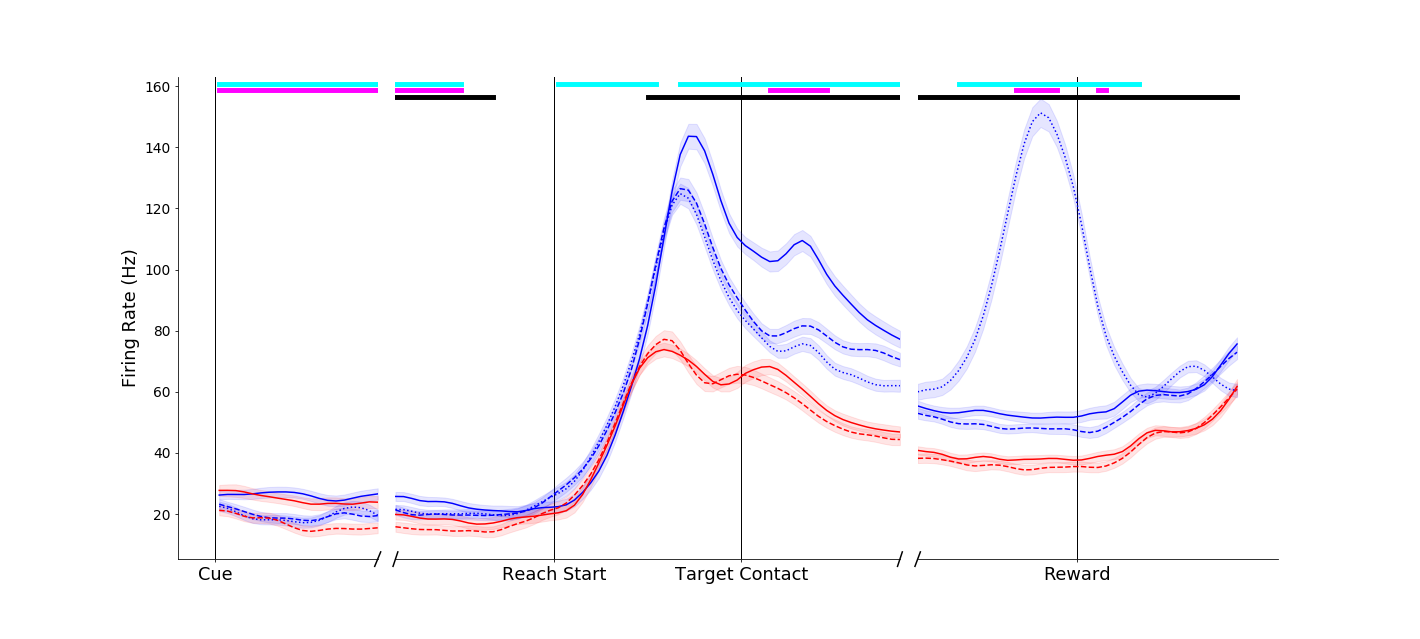

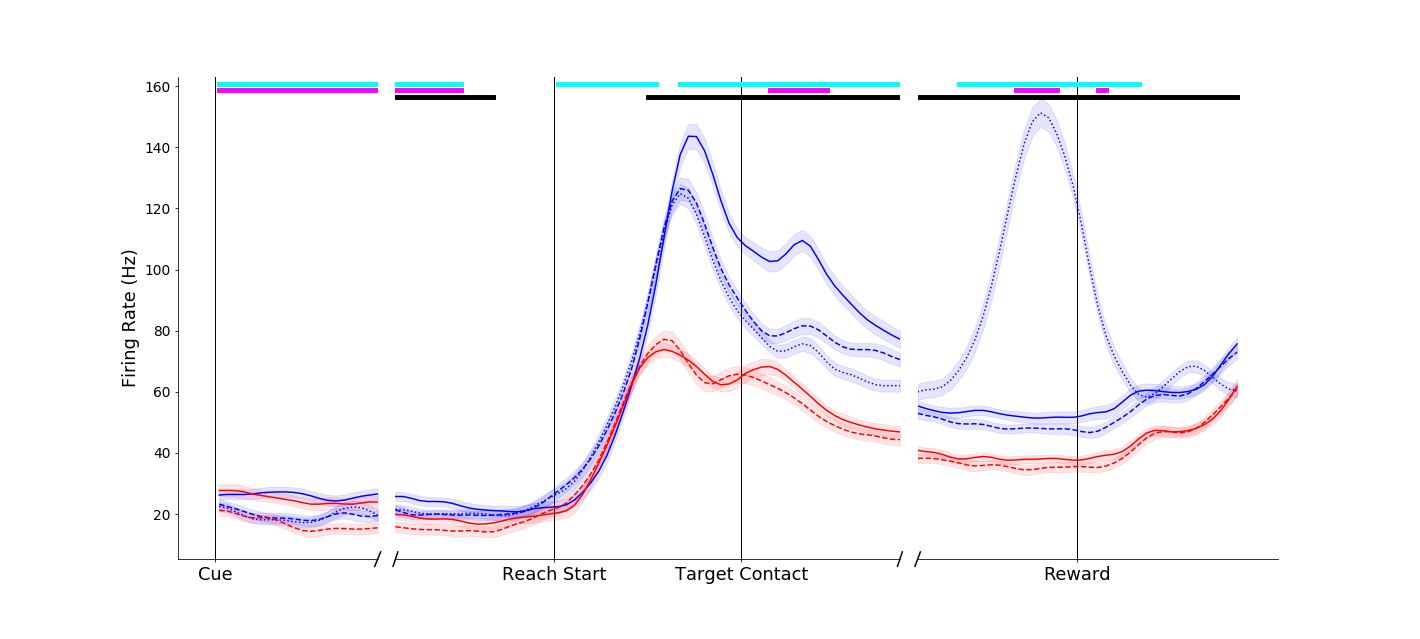

Effects of object grip affordances were apparent even in single neurons in primary motor cortex, ventral premotor cortex and anterior intraparietal cortex! To orient you for the plots below,

- Trial-averaged firing rates are plotted against time.

- The first vertical black line is when the reaching movement starts

- The second vertical black line on the x-axis is when the object is contacted

- Blue traces denote power grasp trials

- Red traces denote pinch grasp trials

- Solid traces denote grasps of simple objects

- Dashed traces denote grasps of the compound object

This neuron fired more vigorously for power grasps made on the compound object (dashed blue trace) than for the same movement made on the simple object (solid blue trace). Interestingly, this affordance-related signal was not present in the pinch grip conditions (red traces) until the grasp maintenance period.

This neuron showed a more complex response. An effect similar to that of the above neuron occurred around movement onset (blue traces separated at Reach start). However, another interesting effect can be observed before movement onset - the neuron appears to fire less in general when the compound object was to be grasped (dashed traces below solid traces before Reach start). Temporally and informationally complex responses like this were common.

These are only preliminary analyses, and just the first steps of understanding the implications of these data! I will have more detailed descriptions coming soon.

Manipulation Affordances

In our daily activities, we almost never simply grasp an object. The vast majority of our actions are to actually do something with the object after we have grasped it. Manipulation affordances are the set of possible manipulation actions we can take on an object after it is grasped. For instance, a certain door handle could afford pulling, but not pushing.

To investigate whether the frontoparietal motor areas of the brain encode this manipulation affordance knowledge, I constructed two more objects:

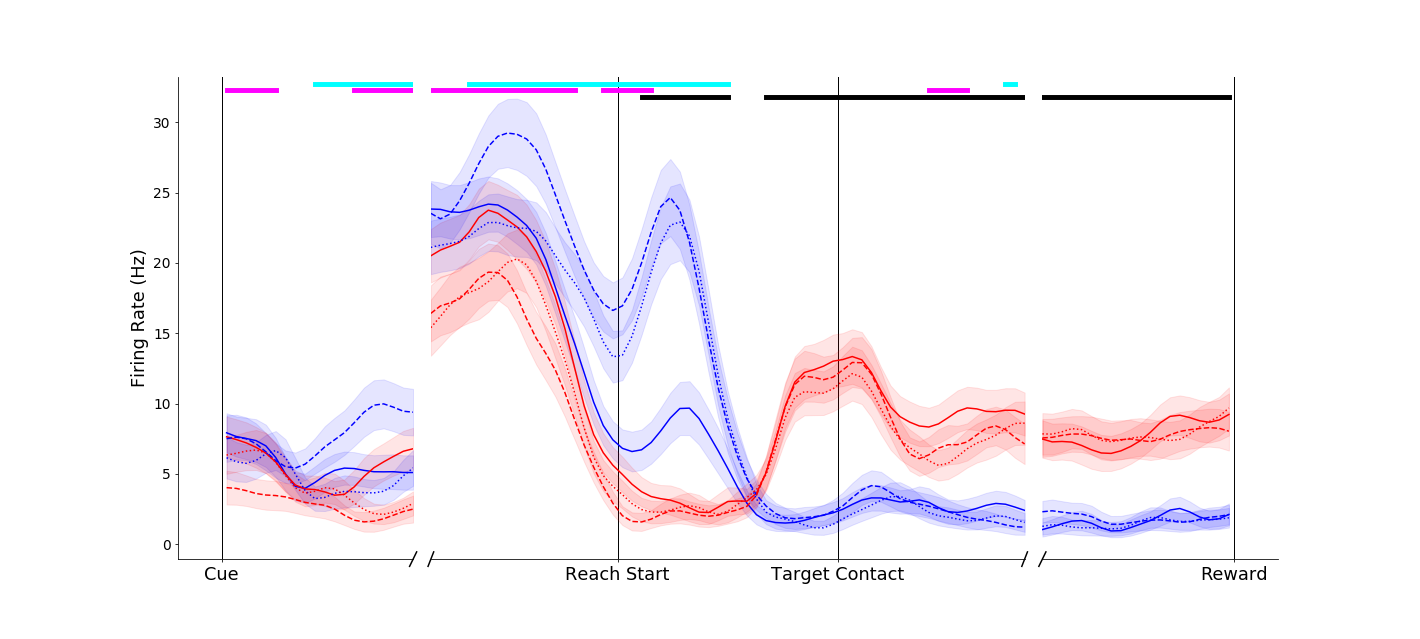

These objects were 3D printed and instrumented with force sensors. The black object was always bolted down and fixed in place, while the blue object could be either bolted down or released, allowing it to slide up and down on rails, in which case it gains the manipulation affordance of lifting. Both objects could be grasped with a power grip around the middle, or a pinch grip on the upper tab.

In order to study potential learning of manipulation affordances, I initially had the monkey grasp both objects when they were both bolted in place. In this scenario, the objects were functionally exactly the same and differed only in color. Then, after many practice sessions, I released the movable object and had the monkey practice lifting it, learning that this object now afforded lifting. Then, I had the monkey simply grasp both objects without lifting.

In these plots, solid lines are grasps made on the black, fixed object, and dashed lines are grasps made on the movable object. Blue lines are power grips and red lines are pinch grips. The plot on the left is from a session during which both objects were fixed in place. The plot on the right is from a day after the monkey learned that the blue object could be lifted; the black line in the right-hand plot shows trials in which the monkey grasped and then actually lifted the movable object.

As we can see, this particular neuron did not initially distinguish between the two objects when they were grasped with a power grip. But after learning, this neuron shows distinct activity for each object (blue solid and dashed lines are separated, right-hand plot) even when the objects were just grasped in the same way and no lift occurred!

The manipulation affordance effects could be quite dramatic, even in primary motor cortex. This neuron fires much more for the mobile object (dashed lines), even though the movements are the same as those made on the fixed object (solid lines). Such effects were never seen in the pre-learning sessions.

It must be noted that these different activity patterns are not signatures of manipulation movement preparation, as the dashed blue traces indicate trials when the mobile object was simply grasped and NOT lifted; the monkey knew that no lifting was required on those trials. Thus, we can conclude that these distinctions actually entail manipulation affordance knowledge encoding in these brain areas!

These plots are first-glance preliminary looks at these data. I am still actively analyzing, and will have more results soon! I'll also be submitting this work for publication. I presented some related work at the Society for Neuroscience Meeting in 2018.

Electrode Array Implant Guidance System

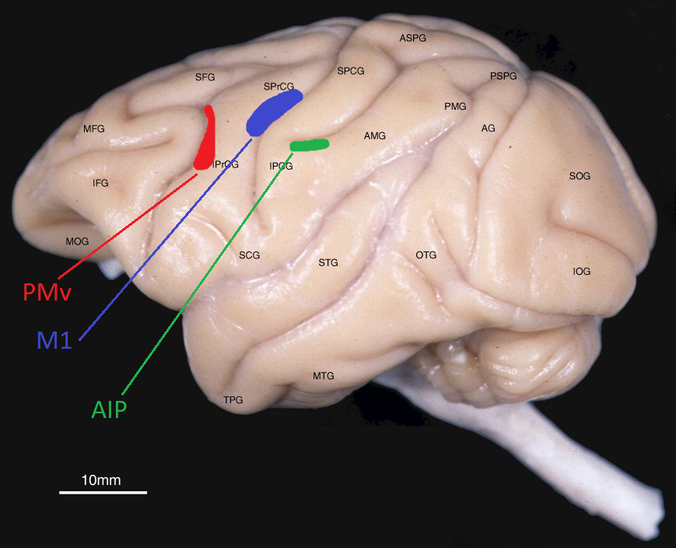

In order to perform the three studies described above, we needed to simultaneously record populations of neurons in three brain areas known to be related to grasping activity: primary motor cortex (M1), ventral premotor cortex (PMv), and anterior intraparietal area (AIP). To do so, we would have to implant chronic electrodes into each of these areas. Just one problem stood in our way: no one in the Motorlab had ever implanted electrode arrays in PMv or AIP. To make things even harder, PMv and AIP are mostly located deep within sulci, the folds of the brain.

I brought my mechanical engineering background to bear on this problem, and created an imaging-guided insertion guidance system for use during the electrode implant neurosurgery, to ensure that we would get successful recordings from these areas. Here's how I did it.

First, here's a photo of the three brain areas we wanted to implant, on a monkey brain.

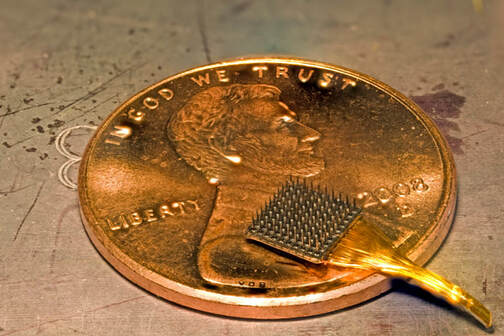

First, we had to pick which arrays to use. We wanted to go with reliable solutions. From previous experience, we knew that the Utah array from Blackrock was a good choice for implantation in M1. Through discussion with colleagues, we decided to use the longer Floating Microelectrode Arrays from Microprobes for PMv and AIP.

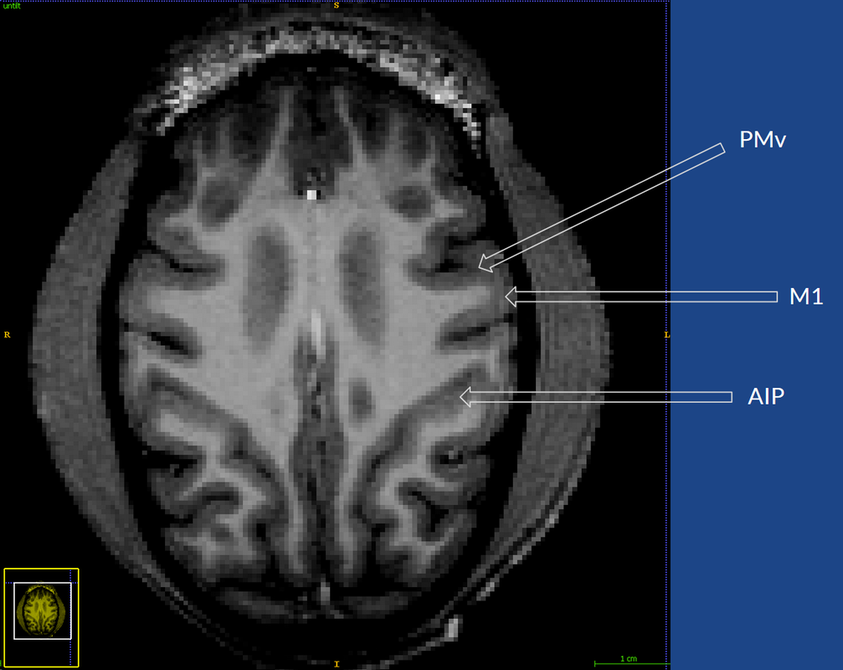

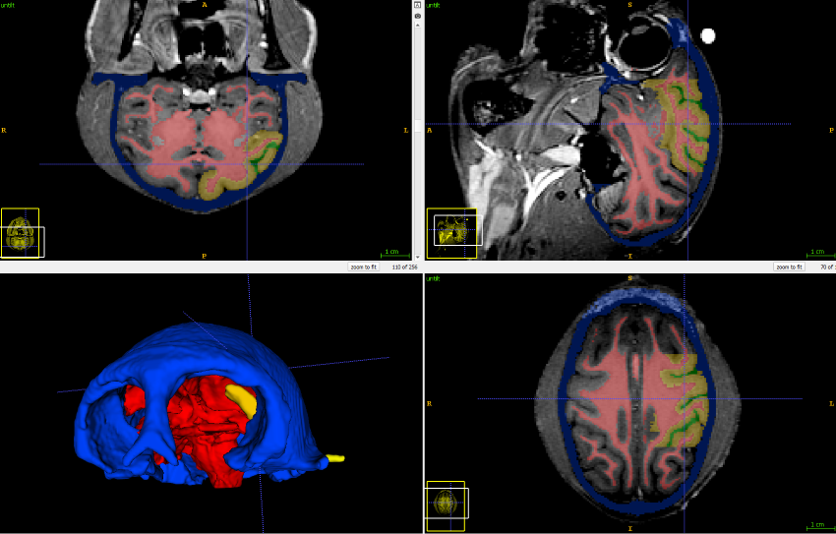

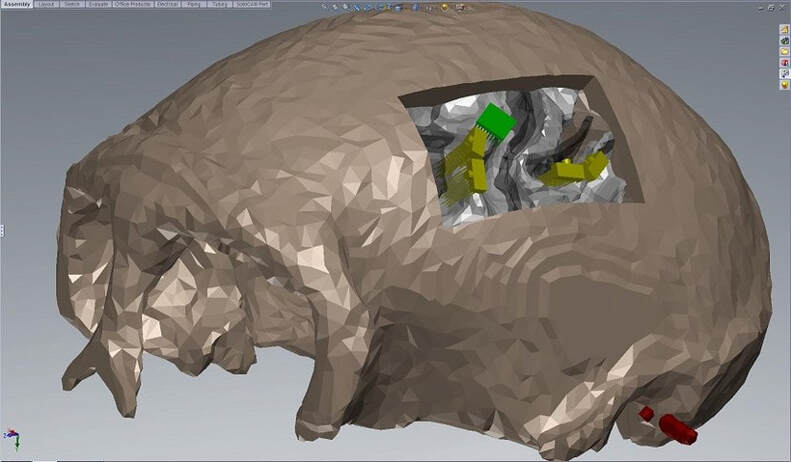

The first step was to take an MRI of the monkey's brain, to see what we were working with:

Next, the MRI image was segmented to separate the different tissues. Here, the skull is colored blue, the white matter tracts are colored red, the cortex is colored yellow and the intrasulcal space is colored green.

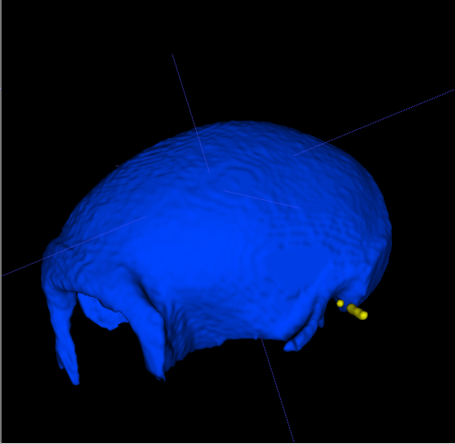

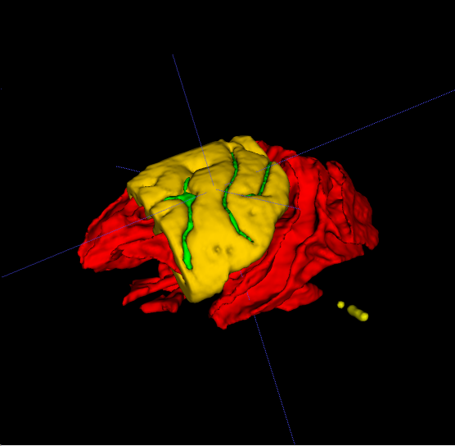

The segmentation software lets us convert these regions to 3D surfaces. I imported these surfaces into SolidWorks, where I also modeled the microelectrode arrays.

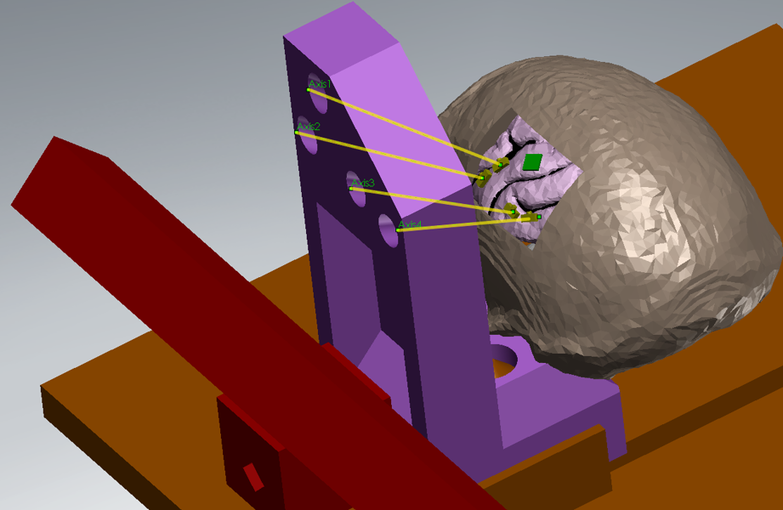

Now we had a good virtual model of where the arrays should go. But we needed a way to physically implant them in these locations during surgery. To do this, I modeled the stereotaxic head mounting frame that we would use during surgery. Then I designed a new physical piece to line up the arrays for insertion.

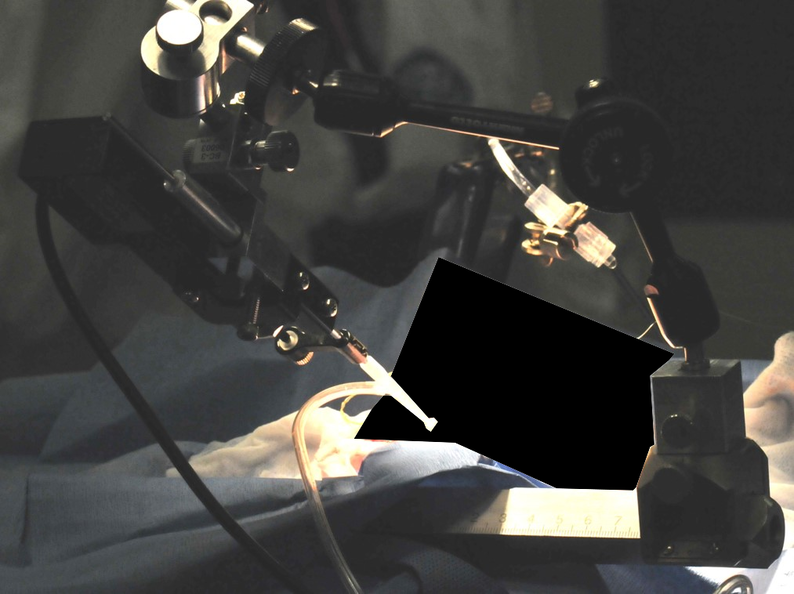

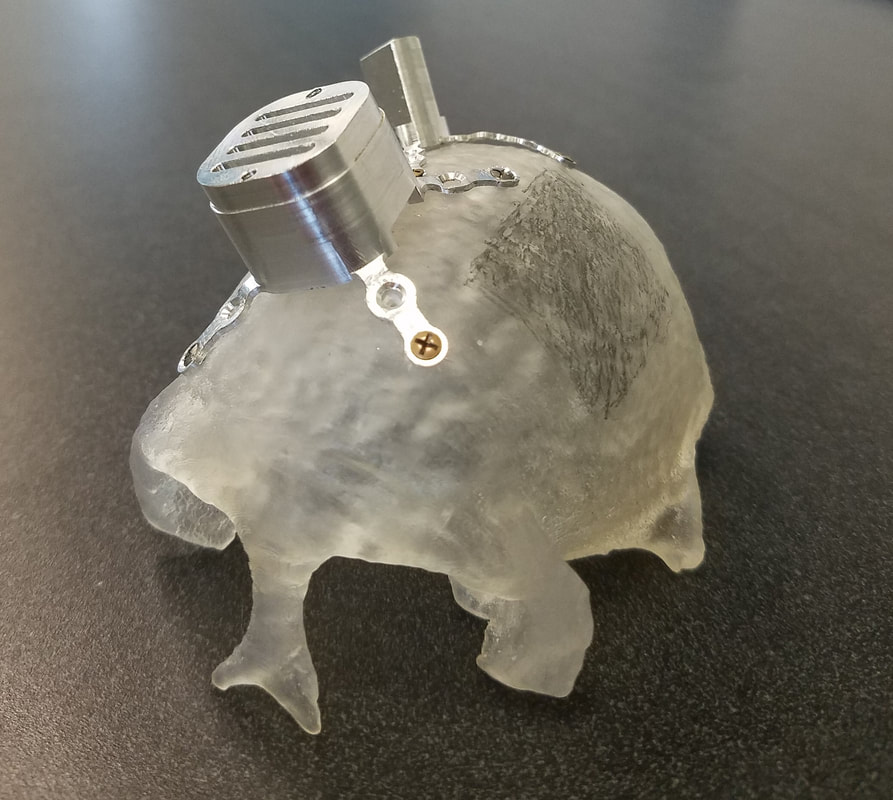

I then fabricated this aligner using a Form 1+ SLA 3D printer. We were able to use the aligner during surgery to configure the manipulator arm to line up with each array:

We could then use this configured manipulator arm to hold the vacuum-tip microdrive, which holds the arrays and advances them into the cortex.

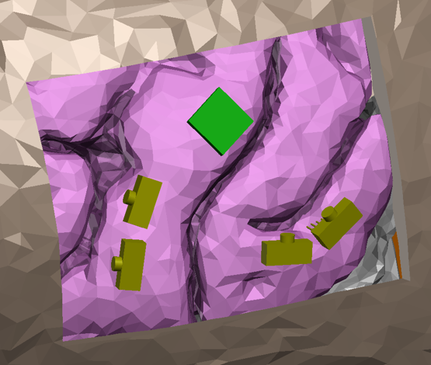

This allowed us to rapidly and accurately insert each array into the planned locations. Here's a trace of a photo of the actual arrays in place (left), compared with the planned versions (right):

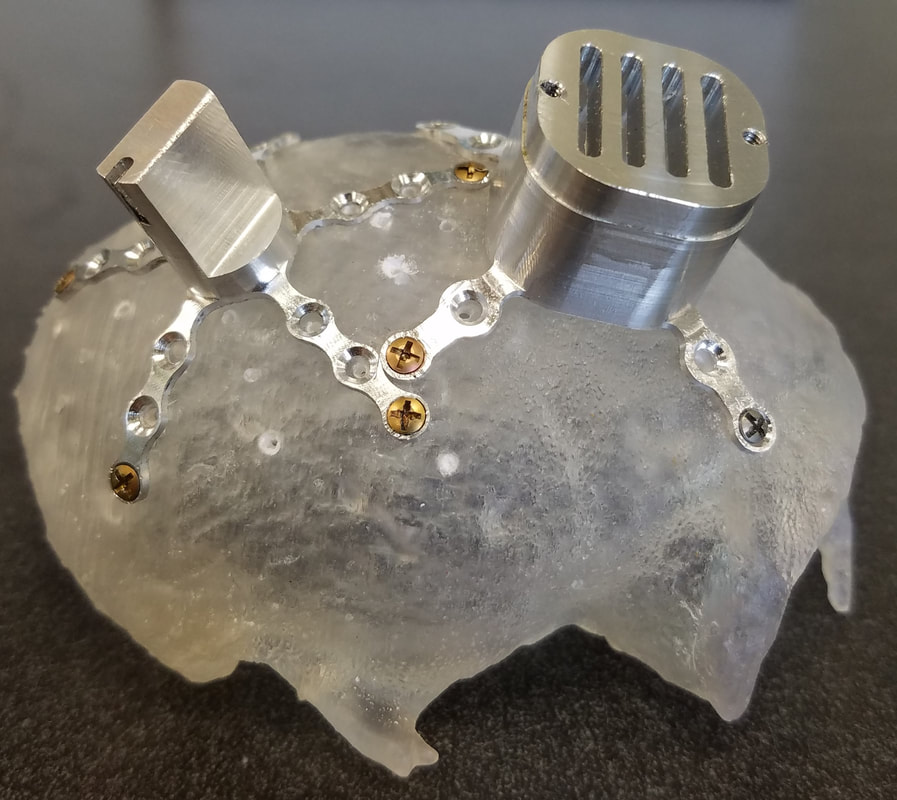

Implanting so many arrays also brought the challenge of housing the array connectors. The Utah array came assembled with a pre-made titanium skull-mounted pedestal to house its connector, but a similar solution to house four FMAs did not yet exist. In order to house the FMA connectors in an efficient and stable package, I designed and milled a titanium pedestal that would be mounted directly to the skull:

Here is the final assembly of the four FMAs, with connectors integrated into the pedestal:

I also used the 3D surface mesh generated from the MRI data to 3D print the monkey's skull. This allowed us to plan out where the pedestals would be mounted in relation to the craniotomy, and was used during surgery as a visual guide for where to make the scalp incisions. Here, the printed skull is shown with mock pedestals mounted in the desired configuration.

Through this multi-step procedure, we were able to record chronically from 224 channels simultaneously, across 5 arrays in 3 different brain areas. This allows us to probe how these areas encode information about objects within our reach and about object affordances (see the two projects above).

I will also be submitting this work for publication in a methods journal.

Contextual Object Information in M1

Owing to the work of Apostolos Georgopoulos (my intellectual grandfather!) in the 1980s, the field of motor neuroscience, and eventually brain-machine interfaces, has progressed largely on the back of the idea that neurons in primary motor cortex (M1) are directionally cosine tuned. This means that each neuron has a "preferred direction" vector in space. Each neuron fires maximally when the subject makes a reaching movement along that preferred direction. When a reach is made in another direction, the neuron doesn't shut down completely - it simply fires at a lower rate, proportional to the cosine of the angular difference between the neuron's preferred direction vector and the actual reach vector. This is equivalent to linear firing rate tuning to velocity.
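To make the cosine tuning idea concrete, here is a minimal sketch of a single model neuron. The baseline rate and modulation depth are made-up illustrative values, and the function name is hypothetical. Because the cosine of the angle between two unit vectors is just their dot product, the rate is a linear function of the components of the reach direction, which is why this form is called linear tuning.

```python
import math

def cosine_tuned_rate(reach_dir, preferred_dir, baseline=10.0, depth=8.0):
    """Rate = baseline + depth * cos(angle between reach and preferred direction).

    baseline and depth (spikes/s) are arbitrary illustrative values.
    Both direction vectors must be nonzero.
    """
    dot = sum(r * p for r, p in zip(reach_dir, preferred_dir))
    norm_r = math.sqrt(sum(r * r for r in reach_dir))
    norm_p = math.sqrt(sum(p * p for p in preferred_dir))
    # dot / (norm_r * norm_p) is the cosine of the angle between the vectors
    return baseline + depth * dot / (norm_r * norm_p)

preferred = (1.0, 0.0, 0.0)
print(cosine_tuned_rate((1.0, 0.0, 0.0), preferred))   # 18.0, reach along the preferred direction
print(cosine_tuned_rate((0.0, 1.0, 0.0), preferred))   # 10.0, orthogonal reach gives the baseline rate
print(cosine_tuned_rate((-1.0, 0.0, 0.0), preferred))  # 2.0, reach opposite the preferred direction
```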

These basic linear models have been the driving force behind the early neural prosthetic decoders - when a population of neurons is recorded, and each of their preferred directions is known, the intended reach direction can be statistically inferred.
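One classic way to do this inference is the population vector: each neuron "votes" for its preferred direction, weighted by how far its firing rate is above or below baseline. The sketch below is a simplified illustration with hypothetical names and made-up numbers, not the decoder used in any of the studies described on this page.

```python
import math

def population_vector(rates, baselines, preferred_dirs):
    """Estimate reach direction as a rate-weighted sum of preferred directions.

    rates, baselines: firing rate and baseline rate (spikes/s) per neuron
    preferred_dirs: one unit vector per neuron
    Returns a unit vector, or the zero vector if all votes cancel.
    """
    dims = len(preferred_dirs[0])
    vec = [0.0] * dims
    for rate, base, pd in zip(rates, baselines, preferred_dirs):
        weight = rate - base  # positive if the neuron fires above baseline
        for i in range(dims):
            vec[i] += weight * pd[i]
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm > 0 else vec

# Four hypothetical neurons with preferred directions along +x, -x, +y, -y.
# Their rates follow cosine tuning (baseline 10, depth 8) for a reach along +y.
pds = [(1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0)]
decoded = population_vector([10.0, 10.0, 18.0, 2.0], [10.0] * 4, pds)
print(decoded)  # points along +y
```

This simple version works best when preferred directions are spread evenly over the workspace; with uneven coverage the estimate is biased, which is one motivation for regression-based decoders.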

Though these models have worked well to describe brain activity during simple 3D reaching movements, it is currently unclear whether such a model can be extended to the complex high-dimensional movements that occur during object grasp and interaction. For example, recent 10D control of a robotic arm at Pitt faced significant challenges when using such models to try to control a more dextrous robotic hand to interact with objects.

I set out to answer the question: when interacting with real objects, does a single, fixed linear tuning model suffice to explain neural activity in M1? Or is there some additional contextual, object-related information in the neural code, and how might this interact with movement and muscle activity tuning?

For this project, I analyzed data that had previously been collected at the Motorlab by Dr. Sagi Perel for his doctoral dissertation. He trained monkeys to grasp six different objects while acutely recording single neurons in M1, hand and arm movements and muscle activity with implanted EMG sensors.

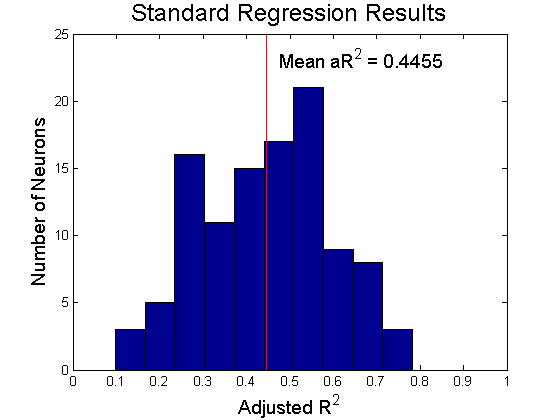

I then looked at the residuals of this linear model fit. The residuals essentially describe how the actual firing rate differs from that predicted by the linear model. If the model predicts a low firing rate, and the actual firing rate is high, you get a large positive residual.

If the neurons truly followed a linear tuning scheme and were only tuned to kinematics and EMG, the distribution of residuals should be completely random, regardless of the context in which the movement was made.

However, a one-way ANOVA on the residuals, grouped by object, revealed that 107 of the 108 recorded neurons showed significantly different residuals for reaches to different objects. That is, there was systematic object-context related structure in the firing rates beyond what was accounted for by linear tuning to kinematics and EMG.
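The logic of this test can be sketched in a few lines. The example below computes residuals and the one-way ANOVA F statistic for residuals grouped by object; the function names and the toy numbers are assumptions for illustration only, not the original analysis code or data. Turning F into a p-value requires the F distribution, which a statistics library such as SciPy (`scipy.stats.f_oneway`) provides.

```python
def residuals(actual, predicted):
    """Actual firing rate minus the rate predicted by the linear model."""
    return [a - p for a, p in zip(actual, predicted)]

def one_way_anova_f(groups):
    """F statistic for a one-way ANOVA over groups of residuals (one group per object)."""
    all_values = [x for g in groups for x in g]
    grand_mean = sum(all_values) / len(all_values)
    means = [sum(g) / len(g) for g in groups]
    # Variability of the group means around the grand mean
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variability of individual residuals around their own group mean
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)

# Toy residuals for three hypothetical objects (made-up numbers)
toy = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
print(one_way_anova_f(toy))  # large F: group means differ more than within-group scatter
```

If the linear model captured everything, residuals would scatter around zero for every object and F would stay near 1; a large F means the residuals shift systematically with the object being grasped.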

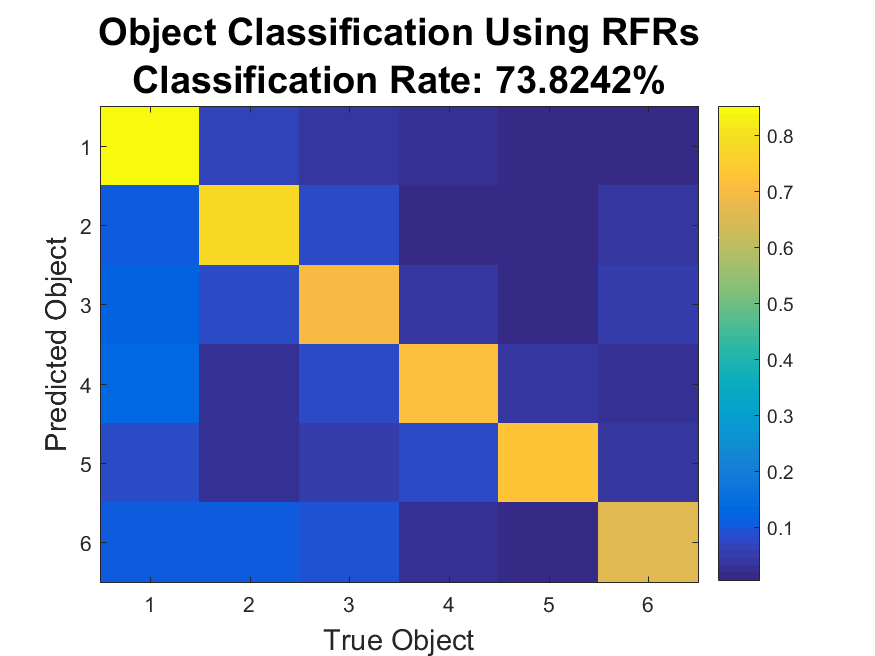

This structure was also revealed through classification: a naive Bayes classifier was able to identify which object was being reached to with a 73.8% success rate (chance level 16.7%).

This indicates that there is some object-related information in the firing rates beyond what would be expected from linear tuning alone.

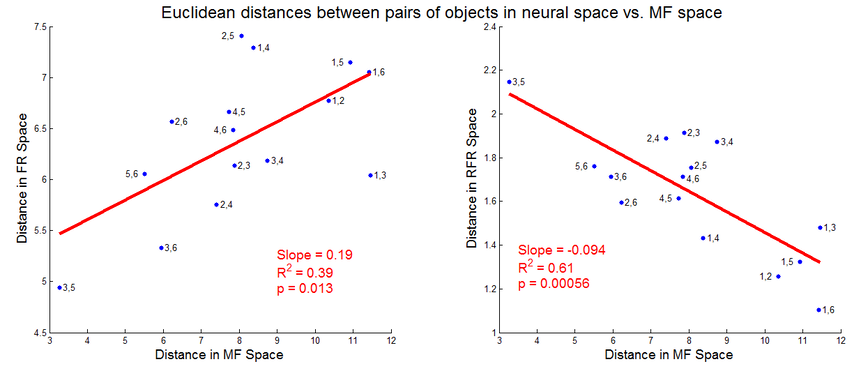

What was the meaning of this additional structure in the residual firing rates? To dig deeper, I calculated the distances between pairs of objects in both movement feature (kinematics and EMG) space and in neural space. If two objects are far apart in movement feature space, that means they require much different hand movements to grasp. Likewise, if two objects are far apart in neural space, that means that the firing rate patterns for grasps on those objects are dissimilar.

If M1 neurons are indeed proportionally tuned to movement features, we should see an increase in neural space distance with an increase in movement feature space distance. We do indeed see such an effect, as in the left-hand plot below. However, looking at an analogous plot of the residual firing rates reveals a strikingly different effect. The right-hand plot shows that the most dissimilar residual firing rates occur when reaching to the objects that require the most similar movements and muscle activity. That is, the structure in the residuals may function to separate the representations of very similar grasps on similar yet distinct objects!

This result also implies that the structure in the residuals is not due to smooth nonlinear tuning to kinematics and EMG; if that were so, objects requiring very similar kinematics would still evoke very similar neural activity, and thus the residual firing rates should lie close together. If smooth nonlinear tuning were indeed at play here, the right-hand plot should still have a positive slope.
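The distance comparison described above can be sketched as follows. Each object is summarized by one mean vector in each space, distances are computed for every pair of objects, and the two sets of distances are then correlated. The names and toy vectors are hypothetical, and using Euclidean distance and a Pearson correlation is an assumption about the details.

```python
import math
from itertools import combinations

def pairwise_distances(vectors_by_object):
    """Euclidean distance between the mean vectors of every pair of objects."""
    names = sorted(vectors_by_object)
    return [math.dist(vectors_by_object[a], vectors_by_object[b])
            for a, b in combinations(names, 2)]

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

# Made-up mean vectors for three hypothetical objects
movement_space = {"A": [0.0, 0.0], "B": [3.0, 4.0], "C": [6.0, 8.0]}
neural_space = {"A": [0.0], "B": [1.0], "C": [2.0]}

d_move = pairwise_distances(movement_space)
d_neural = pairwise_distances(neural_space)
print(pearson_r(d_move, d_neural))  # positive: neural distance grows with movement distance
```

A positive correlation corresponds to the upward slope expected under proportional tuning; running the same comparison on residual firing rates and finding a negative relationship is the pattern described for the right-hand plot.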

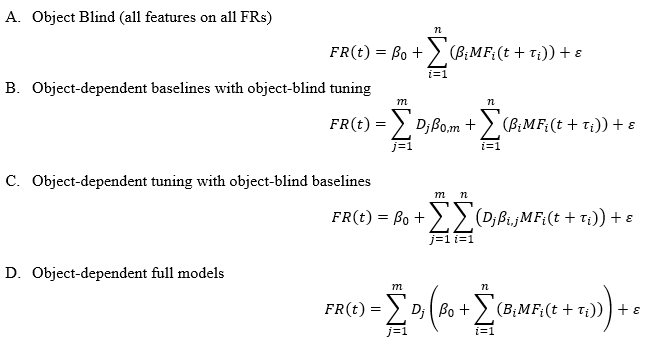

I then compared the original model (A) against three alternative, more complex models (B-D). These models allowed:

A. The original fixed, linear tuning to movement features (object-blind tuning to movement features)

B. Direct, constant increases in firing for different objects.

C. Interaction between object identity and movement tuning (object-dependent tuning to movement features)

D. Both direct and interactive object effects.

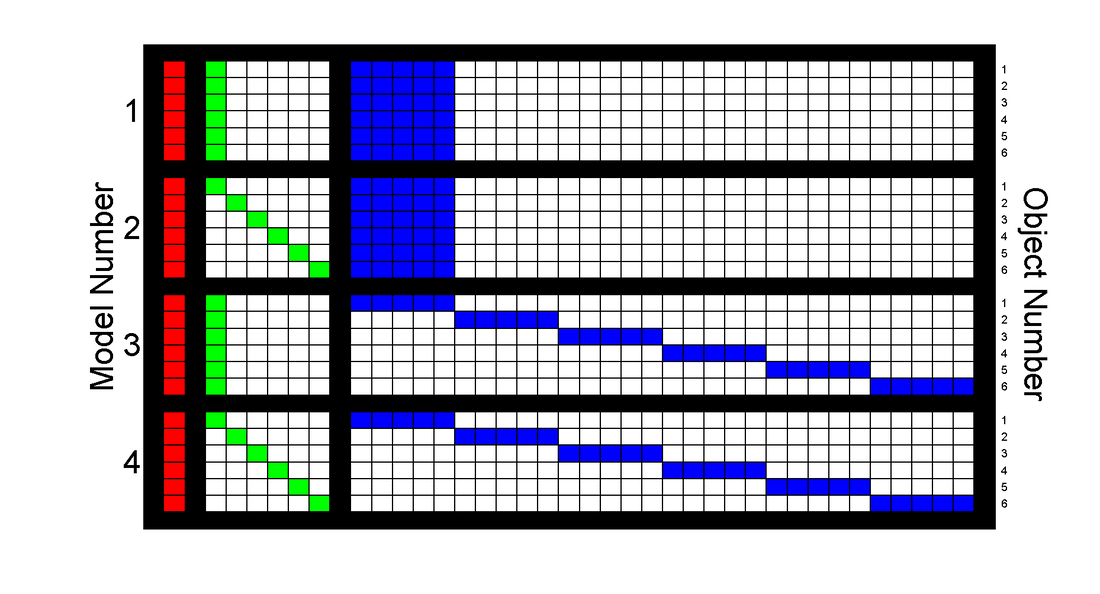

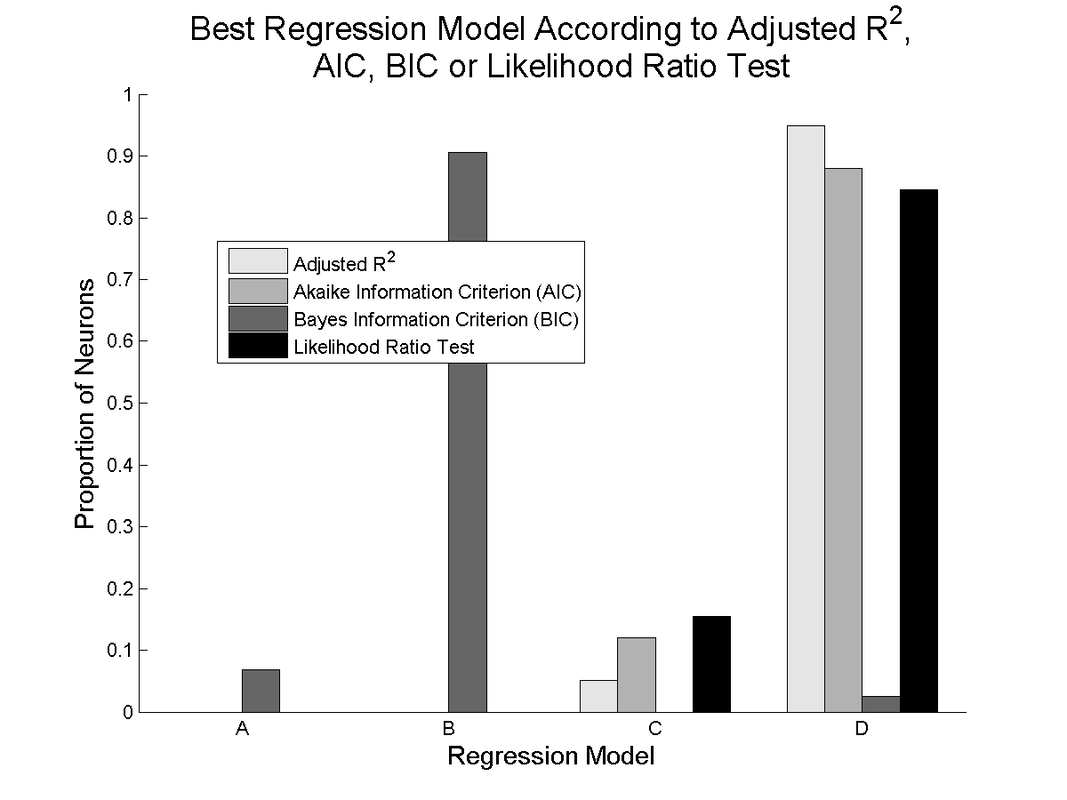

Viewing the adjusted R^2 for each model, we see evidence of modest increases in model performance when adding direct object coding (model B vs. model A), and a much larger boost when adding object-dependent tuning parameters (model C vs. models A and B). A very modest further increase can be seen with the fully complex model D.

Other model selection criteria show general agreement with the adjusted R^2, except for the Bayesian Information Criterion (BIC), which most often selects model B, the fixed movement tuning model with direct object encoding. This is likely due to the much heavier penalization for additional free parameters in the calculation of the BIC.
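To show how the two criteria can disagree, here is a small sketch using the standard adjusted R^2 formula and the usual Gaussian-error form of the BIC (up to an additive constant). All sample sizes, parameter counts and error values below are made up for illustration and are not taken from the actual model fits.

```python
import math

def adjusted_r2(r2, n_samples, n_predictors):
    """Adjusted R^2: R^2 penalized for the number of predictors."""
    return 1.0 - (1.0 - r2) * (n_samples - 1) / (n_samples - n_predictors - 1)

def bic_gaussian(rss, n_samples, n_params):
    """BIC for a linear model with Gaussian errors (lower is better)."""
    return n_samples * math.log(rss / n_samples) + n_params * math.log(n_samples)

n = 100      # hypothetical number of observations
tss = 100.0  # hypothetical total sum of squares

# Hypothetical simpler model: 11 predictors plus an intercept, residual sum of squares 50
adj_simple = adjusted_r2(1 - 50.0 / tss, n, 11)
bic_simple = bic_gaussian(50.0, n, 12)

# Hypothetical richer model: 30 predictors plus an intercept, residual sum of squares 35
adj_rich = adjusted_r2(1 - 35.0 / tss, n, 30)
bic_rich = bic_gaussian(35.0, n, 31)

print(adj_rich > adj_simple)  # True: adjusted R^2 prefers the richer model
print(bic_rich > bic_simple)  # True: BIC is higher (worse) for the richer model
```

The BIC charges log(n) per extra parameter, so with many added interaction terms its penalty can outweigh a real improvement in fit that the adjusted R^2 still rewards.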

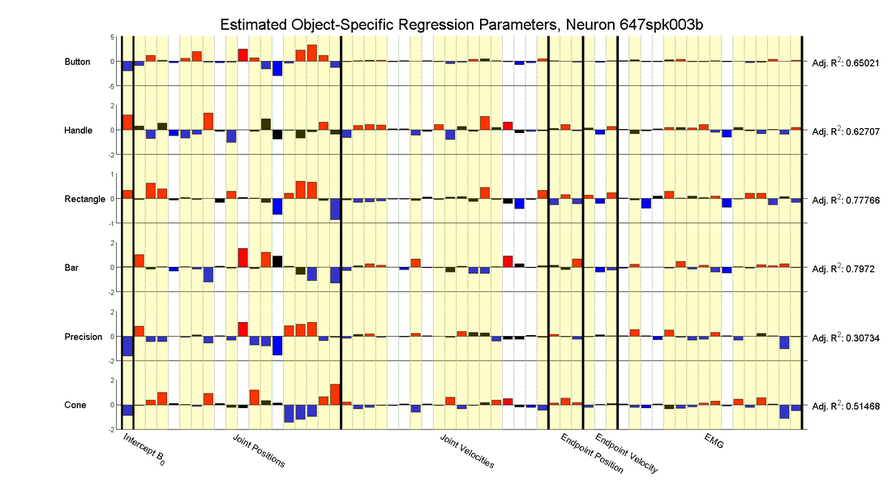

The tendency for neurons to show apparently different tuning to movements depending on which object is grasped is readily apparent when looking at a single neuron and building a separate tuning model for each object:

As can be seen, individual tuning parameters often switch signs between these models, and even the adjusted R^2 values can change drastically depending on which object is being grasped.

All of these results together imply that a single, fixed linear proportional tuning model relating firing rates to movement features may not be the whole story when interacting with physical objects. The limits of this behavioral task (it was not designed to directly answer this particular question), somewhat small sample sizes, non-simultaneity of neural recordings and the risk of over-fitting due to inclusion of so many model parameters meant that these results could not be definitively conclusive, but they were suggestive enough to warrant further investigation, which inspired my thesis experiments (see Cortical Encoding of Object Presence and Cortical Encoding and Learning of Object Affordances).

I presented work related to this project at the Society for Neuroscience Meeting in 2014 and at Cosyne in 2016.

Engineered Learning for Robotic Neural Prosthetic Control

In the last decade, there have been great advances in the field of brain-controlled robotic neural prosthetics. These devices have the potential to restore movement and functionality for people with disabilities such as spinal cord injury. Take for example this video from Jen Collinger's Lab here at Pitt, where the subject was able to eat a chocolate bar on her own for the first time since becoming paralyzed:

This incredible result came only after many ad hoc adjustments to the signal processing, decoding algorithm and robot, and many hours of practice by the subject herself. For this technology to become a clinical solution, we must find a way to train the user to control the device without having teams of scientists on hand to assist.

So, with this project, I set out to design a systematic, quantifiable, repeatable way to train a naive neural prosthetic user from beginner to expert. To test the system, I trained monkeys to control a Barrett WAM robotic arm.

The first step was to get the monkey interested in doing the task. Previous work in our lab had the monkeys controlling a robot arm to match a target far out in the workspace. This is a somewhat abstract task for a monkey to understand. We wanted to make the task more naturalistic and so we designed the entire task and system to mimic natural self-feeding behavior. To do this we built an object with an integrated drink tube, which the monkey would pull to its mouth to get a reward. This object was presented via an industrial DENSO robot. This video shows the task in action:

In this video, the task runs automatically with no subject present. On a real experiment day, the monkey sits in the top left. The monkey has 3D control of the robot in the top of the screen via an optimal linear estimator decoder, operating on the neural signals extracted from primary motor cortex via a Utah array. The orange drink-tube object is presented at various spatial locations and the monkey has to move the hand towards the object. Once he gets within a certain radius of the object, the computer takes over control of the arm and completes the grasp, then brings the object to the monkey to deliver the reward. This keeps the task safe for the subject.

This task is useful because it has an easily scalable difficulty curve - we can make the task easier or harder by making the virtual target radius bigger or smaller. Thus we can start out easy and ramp up the difficulty as the subject learns.

Typically, researchers choose a difficulty setting arbitrarily and adjust it by hand to try to match the subject's performance. We wanted a more systematic method, so we came up with a way to estimate the monkey's current skill level. To do so, we ran many thousands of simulated experiments in which the monkey was replaced by a random walk controller combined with some level of correct input that pushes the hand directly toward the target.
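A simulation of this kind can be sketched roughly as follows. This is a simplified illustration and not the simulation code used in the project. The names `cleverness`, `start_dist`, `step` and `max_steps`, the way the random and goal-directed inputs are mixed, and all numeric values are assumptions made for the example.

```python
import math
import random


def random_unit_vector(rng):
    """Draw a direction uniformly on the unit sphere."""
    v = [rng.gauss(0.0, 1.0) for _ in range(3)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def run_trial(cleverness, radius, rng, start_dist=10.0, step=0.5, max_steps=100):
    """Simulate one reach; succeed if the hand enters the target radius in time.

    cleverness = 0 is a pure random walk; cleverness = 1 moves straight to the target.
    """
    pos = [0.0, 0.0, 0.0]
    target = [start_dist, 0.0, 0.0]
    for _ in range(max_steps):
        dist = distance(pos, target)
        if dist <= radius:
            return True
        toward = [(t - p) / dist for t, p in zip(target, pos)]
        noise = random_unit_vector(rng)
        # Mix the goal-directed input with the random-walk input (assumed mixing rule).
        pos = [p + step * (cleverness * u + (1.0 - cleverness) * z)
               for p, u, z in zip(pos, toward, noise)]
    return distance(pos, target) <= radius


def success_rate(cleverness, radius, n_trials=200, seed=0):
    """Fraction of successful simulated trials for one controller and target radius."""
    rng = random.Random(seed)
    hits = sum(run_trial(cleverness, radius, rng) for _ in range(n_trials))
    return hits / n_trials


for c in (0.0, 0.25, 0.5, 1.0):
    print(f"cleverness={c:.2f}  success rate={success_rate(c, radius=1.0):.2f}")
```

Sweeping `radius` for each value of `cleverness` would trace out one success-rate curve per simulated controller, which is the kind of family of curves described here.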

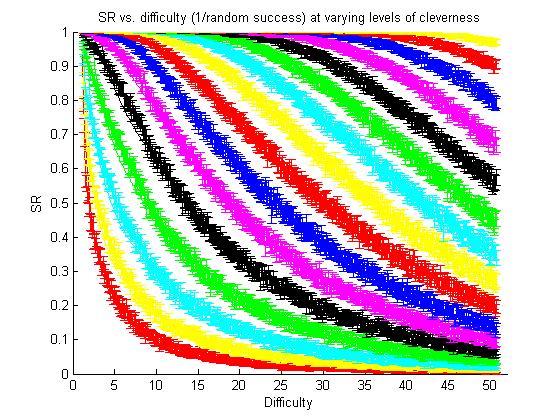

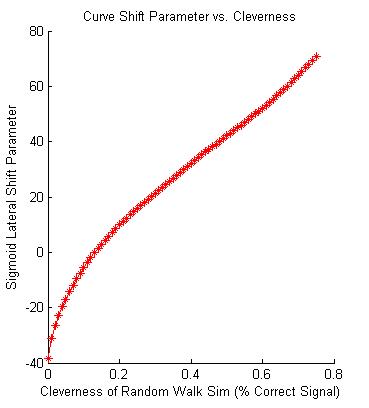

The results of the simulated experiments with the "virtual clever monkey". Each colored curve shows a simulated controller with a different level of cleverness. The red curve on the bottom left shows the performance of a completely random walk controller.

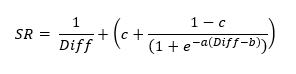

We defined difficulty as 1/(random walk success rate). Thus the left-hand red curve can be fit by SR = 1/Diff.

Through lots of curve fitting attempts, I was able to find a single equation that could describe each of these curves accurately:

I was then able to find a mathematical relation between the parameters a, b and c, allowing us to reduce the equation relating success rate and difficulty down to a single parameter, B, which we call 'skill'.

This allowed us to characterize the monkey's performance with a single parameter, which we could estimate using the equation above. To do so, we had to probe his performance at a variety of difficulties, which we accomplished by making 25% of the trials catch trials. We chose the difficulties to sample using an algorithm that took the previous estimate of skill and the history of catch trials, and picked a new catch trial difficulty at the point on the curve that would yield the most information about the skill parameter estimate.
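One common way to formalize "most information about a parameter" is the Fisher information of a single success/failure outcome, and the sketch below uses that as a stand-in. It is not the algorithm used in the project: it ignores the history of catch trials, and `predicted_success` is a placeholder curve invented for this example (chosen only so that skill = 0 reduces to SR = 1/Diff), not the fitted equation. All function names are hypothetical.

```python
import math
import random


def predicted_success(difficulty, skill):
    """Placeholder success-rate curve, NOT the fitted equation from the project.

    With skill = 0 it reduces to SR = 1/Diff, the random-walk case.
    """
    return difficulty ** (-1.0 / (1.0 + skill))


def fisher_information(difficulty, skill, eps=1e-4):
    """Information that one success/failure outcome carries about skill."""
    p = predicted_success(difficulty, skill)
    # Central-difference estimate of d(success rate)/d(skill).
    dp = (predicted_success(difficulty, skill + eps)
          - predicted_success(difficulty, skill - eps)) / (2.0 * eps)
    return dp * dp / (p * (1.0 - p))


def pick_catch_difficulty(skill_estimate, candidates):
    """Choose the candidate difficulty that is most informative about skill."""
    return max(candidates, key=lambda d: fisher_information(d, skill_estimate))


def is_catch_trial(rng, fraction=0.25):
    """Randomly flag a trial as a catch trial with the given probability."""
    return rng.random() < fraction


# Log-spaced candidate difficulties between 1.01 and 1000 (assumed range).
candidates = [1.01 * (1000.0 / 1.01) ** (i / 199) for i in range(200)]

rng = random.Random(0)
skill_estimate = 1.0
if is_catch_trial(rng):
    trial_difficulty = pick_catch_difficulty(skill_estimate, candidates)
    print(f"catch trial at difficulty {trial_difficulty:.1f}")
else:
    print("normal trial")
```

Under this placeholder curve the most informative catch trials sit well away from both very easy and very hard settings, since outcomes that are almost certain in either direction reveal little about skill.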

Through this method we were able to estimate the monkey's skill on-line, and adjust the difficulty appropriately. We chose to set the normal trials to a difficulty at which we predicted the monkey would have a 70% success rate. We posited this would make for solid learning, as enough error information would be present to inform the monkey on how to improve, but the success rate would be high enough to keep the monkey engaged in the task. This notion is backed up by other research about the "sweet spot" difficulty for learning.
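Setting the difficulty for a target success rate amounts to inverting the success-rate curve at the current skill estimate. The sketch below does this by bisection, which works for any curve that decreases monotonically with difficulty. As before, `predicted_success` is a placeholder invented for illustration and not the fitted equation from this project, and the search bounds are assumptions.

```python
import math


def predicted_success(difficulty, skill):
    """Placeholder success-rate curve, NOT the fitted equation from the project.

    With skill = 0 it reduces to SR = 1/Diff, the random-walk case.
    """
    return difficulty ** (-1.0 / (1.0 + skill))


def difficulty_for_target(skill, target=0.7, lo=1.0, hi=1e6, tol=1e-9):
    """Find the difficulty at which predicted success equals the target rate.

    Bisection on a log scale; assumes success falls as difficulty rises
    and that the answer lies between lo and hi.
    """
    for _ in range(200):
        mid = math.sqrt(lo * hi)  # geometric midpoint
        if predicted_success(mid, skill) > target:
            lo = mid   # still too easy, search harder settings
        else:
            hi = mid   # too hard, search easier settings
        if hi / lo - 1.0 < tol:
            break
    return math.sqrt(lo * hi)


for skill in (0.0, 1.0, 2.0):
    d = difficulty_for_target(skill)
    print(f"skill={skill:.1f}  difficulty={d:.3f}  "
          f"predicted success={predicted_success(d, skill):.3f}")
```

As the skill estimate rises, the difficulty needed to hold the predicted success rate at 70% rises with it, which is what lets the task ramp up as the subject learns.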

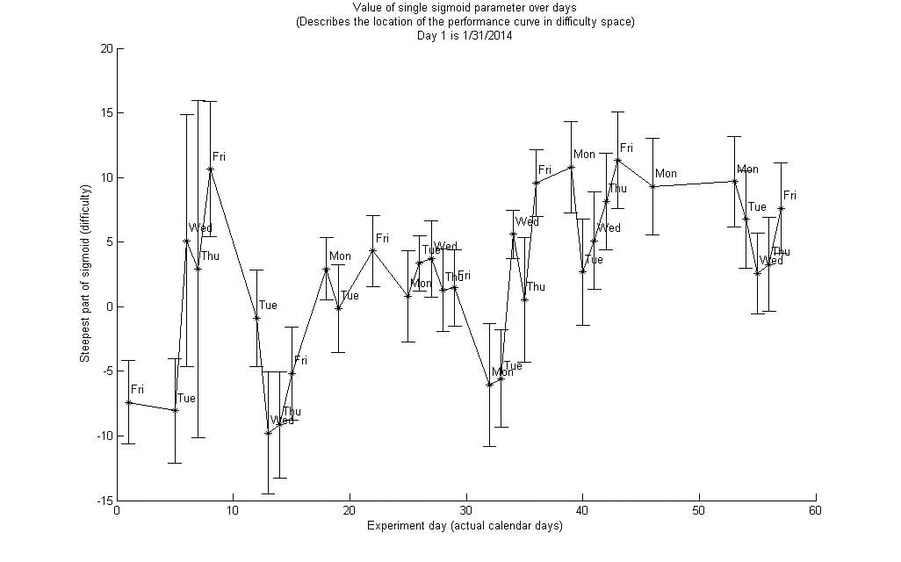

This adaptive difficulty scheme was somewhat successful in training the subject, as can be seen in the plot below, which tracks the monkey's estimated skill over 60 days.

This method also allowed us to track within-session learning.

This method was designed to be easily extensible to neural prosthetic control of arbitrary dimensions, with any task in which difficulty can be systematically modulated and performance can in some way be simulated. Thus it could lay the foundation for a clinical, take-home training program for new neural prosthetic users.

Unfortunately, frequent mechanical failures of the robot meant that we could not get consistent, good-quality long-term data for this project. We were still able to present some of this work at the Society for Neuroscience Meeting in 2014 and at a Center for the Neural Basis of Cognition Brain Bag Seminar.

Neural Signatures of Long Timescale Learning in a Brain-Computer Interface

Coming soon! But see our paper in the Journal of Neurophysiology!